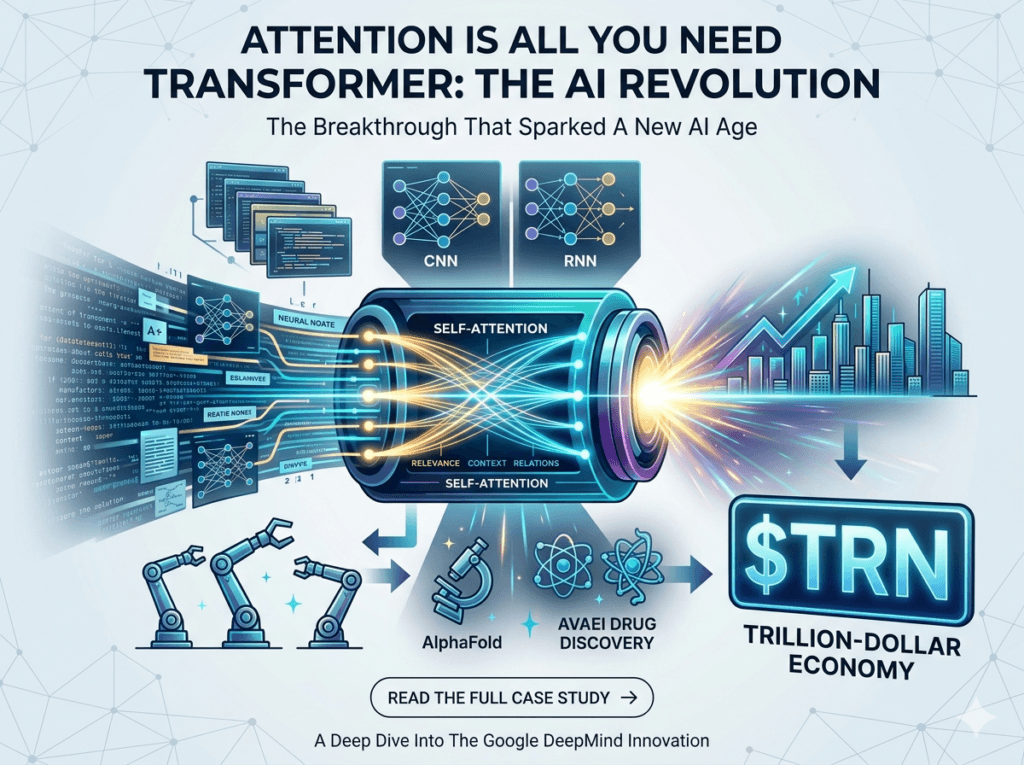

In June 2017, a research paper with the unassuming title “Attention Is All You Need” was published. It didn’t come from a secret underground lab; it came from researchers at Google Brain and Google Research, with one co-author at the University of Toronto. While the authors were simply trying to make language translation better and faster to train, they ended up building the engine for the next industrial revolution: the Transformer architecture.

1. The “Before” Era: Why RNNs Limited AI Progress

To understand the Transformer breakthrough, we have to look at how AI used to “read.” Before 2017, the industry relied on Recurrent Neural Networks (RNNs).

Imagine reading a 500-page novel, but you can only see one word at a time. To understand the current sentence, you must perfectly remember every word that came before it. By page 100, your “memory” of page 1 is a blur. This was the Sequential Processing problem:

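- Bottlenecked Speed: Each step depended on the result of the previous one, so a sequence had to be processed one token at a time. That made training hard to parallelize and slow to scale.

- Vanishing Gradients: RNNs struggled with “long-range dependencies.” The training signal faded as it passed back through many steps, so a model could lose track of a sentence’s subject by the time it reached the verb if they were too far apart.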
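To make the sequential bottleneck concrete, here is a minimal sketch of a plain tanh RNN in NumPy. The function name `rnn_forward`, the weight names `W_x` and `W_h`, and the toy sizes are illustrative assumptions, not taken from any particular model. The point is the `for` loop: step t needs the hidden state from step t-1, so the steps cannot run side by side.

```python
import numpy as np

def rnn_forward(inputs, W_x, W_h, h0):
    """Run a plain tanh RNN over a sequence, one step at a time.

    Hypothetical helper for illustration only.
    """
    h = h0
    states = []
    for x_t in inputs:
        # Each step reads the previous hidden state h,
        # so step t cannot start until step t-1 has finished.
        h = np.tanh(W_x @ x_t + W_h @ h)
        states.append(h)
    return np.stack(states)

# Toy sizes and random weights (assumptions for the example).
rng = np.random.default_rng(0)
seq_len, d_in, d_hidden = 6, 4, 3
inputs = rng.normal(size=(seq_len, d_in))
W_x = rng.normal(size=(d_hidden, d_in)) * 0.5
W_h = rng.normal(size=(d_hidden, d_hidden)) * 0.5

states = rnn_forward(inputs, W_x, W_h, np.zeros(d_hidden))
print(states.shape)  # (6, 3): one hidden state per input step
```

Everything the model knows about the first word has to survive inside that single vector `h` through every later step, which is why distant context tends to fade.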

2. What is the Self-Attention Mechanism?

The Google team proposed a radical shift: Stop processing words strictly one after another. Instead, let the model look at the entire sequence at once and weigh which parts matter most for each word. This is known as Self-Attention.

Think of a cocktail party. Even in a noisy room, your brain can “attend” to a specific voice while filtering out the background hum. A Transformer does something similar with data: for each token, it scores how relevant every other token is and focuses on the ones that matter.

The Technical “Secret Sauce”

For the engineers, the core of this magic is the Scaled Dot-Product Attention formula. It allows the model to map “queries” (Q) against “keys” (K) to find the most relevant “values” (V):

$$\mathrm{Attention}(Q, K, V) = \mathrm{softmax}\left(\frac{QK^{T}}{\sqrt{d_k}}\right)V$$

This formulation enabled parallelization. Because attention over a whole sequence reduces to a few matrix multiplications, the model no longer had to wait for the previous word to finish, and training could finally harness the full power of modern GPUs.
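The attention formula can be sketched in a few lines of NumPy. This is a minimal single-head version under simplifying assumptions: no masking, no batching, and no learned projection matrices. The function names and toy shapes are illustrative. Here `d_k` is the length of each key vector; dividing the scores by its square root keeps them in a range where softmax does not saturate.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the row maximum first for numerical stability.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def scaled_dot_product_attention(Q, K, V):
    """Single-head attention: softmax(Q K^T / sqrt(d_k)) V."""
    d_k = K.shape[-1]
    scores = Q @ K.T / np.sqrt(d_k)      # (n_queries, n_keys) relevance scores
    weights = softmax(scores, axis=-1)   # each row sums to 1
    return weights @ V, weights          # weighted average of the values

# Toy example: 5 tokens, key size 8, value size 16 (assumed sizes).
rng = np.random.default_rng(0)
Q = rng.normal(size=(5, 8))
K = rng.normal(size=(5, 8))
V = rng.normal(size=(5, 16))

output, weights = scaled_dot_product_attention(Q, K, V)
print(output.shape)  # (5, 16)
```

Note that there is no loop over positions: all five tokens are scored against each other in one matrix product, which is the kind of work GPUs are built for.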

3. From Research to a Trillion-Dollar Industry

The decision to publish this research openly triggered a global gold rush. The Transformer didn’t just improve translation; it became the foundation for models such as GPT-4, Gemini, and Claude.

| Impact Area | The “Transformer” Effect |
|---|---|
| Computing Hardware | NVIDIA grew from a company best known for gaming GPUs into one of the world’s most valuable companies, driven largely by demand for Transformer workloads. |
| Software & Coding | Tools like GitHub Copilot (built on Transformers) have increased developer productivity by an estimated 40-50%. |
| Science & Medicine | AlphaFold 2, a breakthrough in protein structure prediction, relies on attention-based modules derived from the Transformer. |
| Economic Growth | Generative AI is projected to add up to $4.4 trillion annually to the global economy. |


4. The Innovator’s Dilemma: Why Google Shared the Crown

A common question in tech is: “If Google invented the Transformer, why is OpenAI leading the market?”

The answer lies in the Innovator’s Dilemma. While Google created the spark, the discovery was too massive to contain. The original authors eventually left Google, and several went on to found AI companies such as Cohere, Character.ai, and Essential AI.

However, by 2026, the landscape has shifted. By merging Brain and DeepMind into Google DeepMind, Alphabet has fully integrated Transformer architecture into its core “AI-first” ecosystem, from Search to multimodal Gemini models.

5. The Future: What Comes After the Transformer?

As we move toward 2027, the industry is evolving beyond basic “Attention”:

- Infinite Context Windows: Moving from reading paragraphs to processing entire libraries or hours of video in one “glance.”

- Physical AI (Robotics): The same logic used to predict the next word is now being used to predict the next physical movement in autonomous robots.

- Efficiency & Sparse Attention: New methods to achieve the same “intelligence” using 90% less electricity—critical for sustainable AI growth.
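As one illustration of sparse attention, the sketch below uses a sliding-window pattern, in which each token attends only to neighbors within a fixed distance. This is just one of several sparse patterns, chosen here as an assumption for the example; the function names and the window size are hypothetical. The code still computes the full score matrix and then masks it, so it shows the attention pattern rather than the actual savings a specialized implementation would deliver.

```python
import numpy as np

def sliding_window_mask(seq_len, window):
    """True where token i may attend to token j, i.e. |i - j| <= window."""
    idx = np.arange(seq_len)
    return np.abs(idx[:, None] - idx[None, :]) <= window

def masked_attention(Q, K, V, mask):
    """Scaled dot-product attention restricted to the allowed pairs."""
    scores = Q @ K.T / np.sqrt(K.shape[-1])
    scores = np.where(mask, scores, -np.inf)          # blocked pairs get zero weight
    scores = scores - scores.max(axis=-1, keepdims=True)
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return weights @ V, weights

# Toy example: 8 tokens, each seeing itself and one neighbor per side.
rng = np.random.default_rng(0)
seq_len, d = 8, 4
Q, K, V = (rng.normal(size=(seq_len, d)) for _ in range(3))

mask = sliding_window_mask(seq_len, window=1)
output, weights = masked_attention(Q, K, V, mask)
print(int(mask.sum()), mask.size)  # 22 64: only 22 of 64 pairs are used
```

With a fixed window, the number of allowed pairs grows linearly with sequence length instead of quadratically, which is where the potential efficiency gain comes from.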

Final Thought: Architecture is Destiny

The story of “Attention Is All You Need” proves that in technology, how you process information is just as important as the information itself. We are no longer just building chatbots; we are building a new way to interact with human knowledge.

What do you think is the next big leap in AI architecture? Let’s discuss in the comments below.

#AI #Transformers #GoogleDeepMind #NVIDIA #TechInnovation #GenerativeAI #DataScience